Part 3: Conducting a Data Quality Assessment

We are continuing with our deep dive into different aspects of conducting a data quality assessment. Part 3 will focus on selecting the appropriate tools for the job. If you missed Part 1 on how to set goals and objectives or Part 2, which describes the process of assessing your current state and how to measure progress, click on the links provided here to check those out. In this article, we will review how to choose the right data quality tools, how to interpret their results and understand their limitations. This, in fact, is a good place to start our discussion, because a common mistake is to believe that a specific tool will serve as a “silver bullet” to solving fundamentally flawed processes.

Understanding the Limitations of Data Quality Tools

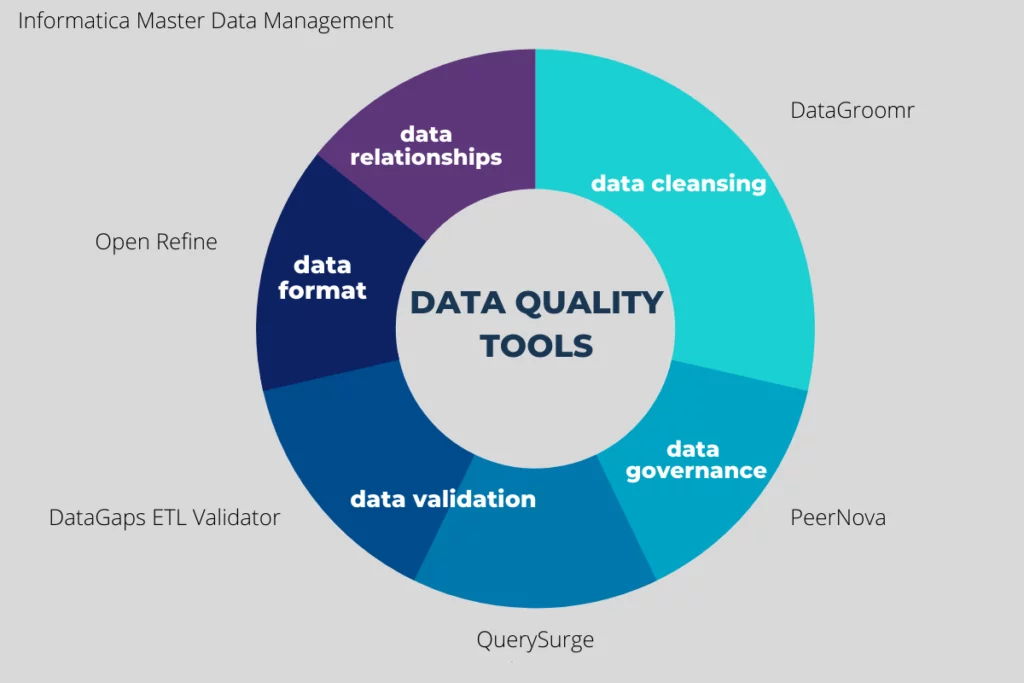

Data quality tools will usually focus on four aspects: data cleansing, data integration, master data management, and metadata management. They will use various algorithms and lookup tables to help you identify errors and anomalies. These tools tend to be on the complex side, but the tradeoff is that a single tool can help you accomplish several different tasks. For example, there are tools that can both validate the contact information for all of your leads and prospects and also help you with data mapping, reconciliation, sample testing, and many other things.

Regardless of how much a tool costs or the sales pitch behind it, there is no such thing as a magic wand that will fix a broken data framework. A data framework includes how your company currently manages and stores its data, workflows, or processes. Pay specific attention to the intended use case for each tool. Some are designed to be used with an application like Salesforce or SAP, while others only detect specific errors, such as bad email or physical addresses. Finally, be sure to understand the level of automation that each tool offers. If your organization has millions of records, you need a tool that can process large amounts of data very quickly and deliver a big picture overview. On the other hand, if you are focused on a small subset of critical data, a tool may be used to both detect and fix issues.

Now that we understand the limitations that can be expected with data quality software, let’s start looking at some of the tools that will be useful at each stage of the data quality assessment.

Data Cleansing Tools

Data cleansing is the process of fixing or removing incorrect, corrupted, incorrectly formatted, duplicate or incomplete data within a data set. The first step towards cleansing your data is to remove duplicates, an area that most organizations struggle with. If you have many different sources for data (a very common scenario), each of these is a potential gateway for duplicate creation. DataGroomr is one application that can help you identify and remove duplicates from your Salesforce environment. It provides an instant analysis of the problem as part of a free trial. You can then eliminate discovered duplicates through merges and prevent future ones that may be introduced through imports.

If you are looking to clean up messy data or transform from one format to another, we recommend using a tool like OpenRefine (previously known as Google Refine). It is an open-source tool that can help you rename, filter, or even add specific data elements. The application can even interactively change large chunks of data to fit specific management requirements. This is very helpful when you are dealing with structural errors such as unusual naming conventions, typos, or incorrect capitalization. For example, a state may be identified by its name, such as “Pennsylvania”, or the abbreviation “PA”. For consistency, the solution may be converting to one value or the other and OpenRefine is good at this task.

Finally, many organizations choose to filter out unwanted outliers or “one offs” that (at least on the surface) do not fit within the data you are analyzing. There are many factors that need to be considered here. First, you need to decide whether an outlier is actually an invalid value. Sometimes, so-called outliers can provide important evidence. When referring to outliers within the context of a data quality analysis, we are talking about data that is irrelevant for analysis or exists due to errors such as improper data entry. Informatica Master Data Management is a great tool that can provide you with a view of all the relationships in your databases from every angle. That way, you will be able to clearly determine if a particular outlier is useful or is just a result of poor data management.

Data Validation Tools

Data validation tools are used to determine if the data you have is accurate, meets business requirements, and can be used as a basis for decision-making. It is important to note that each of these aspects will require its own set of tests. We recommend using a combination of tools such as the Datagaps ETL Validator, which can connect with some of the most popular data sources and repositories. It includes a robust set of features for ETL testing automation and is capable of extracting and comparing millions of records from multiple data sources and running simultaneous test cases.

Some tools will not work on both structured and unstructured data. If you have this scenario, we recommend a tool such as QuerySurge. It works with all types of data and automates validation and testing. It will also keep working to continuously detect data issues in the delivery pipeline, which makes it useful even after the data quality assessment is over.

Improving Data Timeliness

In last week’s article, we discussed that today you may effectively have as little as five minutes from the time a lead is submitted to qualify and contact them. This highlights the importance of accessing data as quickly as possible. Many organizations have legacy data collection and storage systems that, while providing a dependable repository, have barriers preventing the stakeholders from accessing the data efficiently and within the expected amount of time. There are a myriad of reasons for the existence of these siloes including regulatory compliance and corporate data governance rules and processes.

Regardless of which particular issues you will uncover over the course of the data quality assessment, implementing an effective, but timely, data governance model will help you solve a plethora of challenges throughout the enterprise. A very useful platform offered by PeerNova continuously runs data quality and timeliness checks of data across the enterprise. It contains features to identify bottlenecks and speed up the resolution time. Ultimately, organizations need to implement the right mix of data governance processes that meet their corporate and regulatory compliance needs while delivering timely data.

Staying on Top of Any Data Quality Issues

Once you have all of your data quality and information governance initiatives in place, there are tools available to support these initiatives. A very popular tool in this space is the IBM InfoSphere Quality Stage. It can help you maintain the health and quality of your data by preventing bad data from entering your systems. The tool allows users to define individual rules for what constitutes good quality data and then automatically scan any incoming data prior to it reaching any of your repositories. Similar products are also available from companies like Oracle, SAP, and others.

Trust DataGroomr to Handle the Data Cleansing Aspect of the Data Quality Assessment

We discussed a lot of tools that will be useful for each stage and aspect of the data quality assessment. When you reach the data cleansing stage, consider using DataGroomr to help you identify duplicates. As we mentioned earlier, we offer an instant data quality assessment as part of our free 14-day trial. We also simplify the data cleansing process by leveraging machine learning instead of the traditional rule-based approach. This allows you to avoid a complicated setup process because you will be able to simply install the product and start using it right away.

Try DataGroomr for yourself today with our free 14-day trial. DataGroomr will help you clean up your data, make it easier for your sales team to work with the data in Salesforce, and reduce the workload of your Salesforce admins as they will no longer need to waste time creating rules to catch every possible fuzzy duplicate!