Big tech companies understand that maintaining clean data, free of duplicates and inaccuracies, provides a competitive advantage. Businesses that want to level the playing field with big tech will need to manage their data more effectively and efficiently. The big players, like IBM, know that data quality empowers them to: 1) accurately target customers for cross-sell; 2) support data governance for improved ROI; and 3) consolidate applications and automate processes to reduce costs.

Dirty data is data that can be difficult to collect and process because of poor formatting, incoherent structures, and an abundance of duplicate records. Cleaning data on a consistent basis provides a direct line to successfully managing data quality issues and competing with the top tech companies in a particular industry. In this article, we will outline the value of a data management strategy and the most important steps to build that competitive edge.

To Improve Lead Generation and Conversion Efforts

On average, 35% of B2B data decays every year. It’s a cascading problem: decayed records become dirty data, which reduces lead conversions at a cost of $83 per 100 records in the database. According to 44% of respondents in a survey by Validity, their company loses over 10% of annual revenue due to CRM data decay. Respondents estimated that their CRM data will continue to decay by 34% annually without significant improvements. As a result, dirty data impedes a company’s potential to reach significant returns on investment.

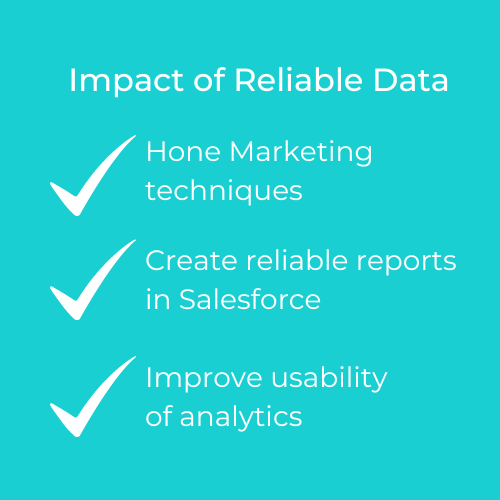

Regularly cleaning all of the data in Salesforce, for example, helps companies generate more actionable business insights. With a reliable and accurate database, a business can better hone its marketing techniques, create up-to-date and reliable reports in Salesforce, and improve the usability of analytics.

To drive a large-scale sales strategy, any successful business needs clean data. Without access to clean data, there is no way to achieve dynamic, engaging, and personalized marketing. Personalized marketing, also known as one-to-one marketing or individual marketing, is a strategy that requires businesses to have the most up-to-date information about their customers. This includes keeping track of their most recent purchases, website activity, and even life developments like marriages and new additions to the family. The only way to maintain that level of visibility is through clean data.

They Don’t Let Dirty Data Steal Precious Resources

Research shows that the cost of bad data is somewhere between 15% and 25% of revenue for most companies. In addition, 6 out of 10 companies have no idea how much money they are losing every year as a result of their poor data quality. Perhaps the reason for this is that companies don’t have a grasp of the widespread effects dirty data is having on their organization and, therefore, don’t see the point of having a process to analyze and assess the damage it is causing.

The value of data is increasing every year for every type of business. The cost savings from maintaining an accurate database can offset the cost of investing in automation processes for cleaning data. There is additional revenue to be accessed by sales teams when they are harvesting from reliable data. One of the first steps to get data quality issues under control is to conduct a data quality assessment, which will identify where dirty data may be draining resources. The next steps could include isolating the data corruption source and then automating data deduplication and validation.
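To make the idea of a data quality assessment more concrete, here is a minimal sketch in Python. It is an illustration only, not a prescribed method: the function name `assess_quality`, the field names, and the choice of a normalized email address as the duplicate key are all assumptions made for this example. It counts how many records are missing each required field and how many records repeat a key that has already been seen.

```python
from collections import Counter


def assess_quality(records, required_fields, key_field):
    """Return simple quality metrics for a list of dict records.

    Hypothetical helper for illustration: a field counts as missing when
    it is absent, None, or blank; records count as duplicates when their
    key field matches after trimming whitespace and lowercasing.
    """
    missing = Counter()
    keys = Counter()
    for rec in records:
        for field in required_fields:
            value = rec.get(field)
            if value is None or not str(value).strip():
                missing[field] += 1
        key = str(rec.get(key_field) or "").strip().lower()
        if key:
            keys[key] += 1
    # Every occurrence of a key beyond the first is a redundant record.
    duplicate_records = sum(n - 1 for n in keys.values() if n > 1)
    return {
        "total": len(records),
        "missing": dict(missing),
        "duplicate_records": duplicate_records,
    }


sample = [
    {"name": "Ann", "email": "ann@example.com", "phone": ""},
    {"name": "Ann B", "email": "ANN@example.com ", "phone": "555"},
    {"name": "", "email": "bob@example.com", "phone": None},
]
report = assess_quality(sample, ["name", "email", "phone"], "email")
print(report)
```

A report like this gives a first indication of which fields and which sources deserve attention before any automation is put in place.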

To Improve Productivity Across Departments

Sales teams that are forced to deal with incomplete records will naturally experience a decrease in overall productivity since they will need to spend time searching for the right information. For example, a lead with a missing extension number can cost a sales team member an extra five minutes to get to the right person.

For businesses that notice a decrease in productivity for one or more departments, it’s not too late to evaluate the root cause. Data plays a big part in business – sales teams rely on good data to make calls and close deals, marketing needs leads to be able to do their jobs, and accounting has to have proper information passed to them in order to bill accordingly. Since data touches so many departments, it is not uncommon to have data be the prime cause of productivity loss.

They Automate Data Cleansing to Be More Competitive

Data cleansing involves fixing or removing incorrect, corrupted, incorrectly formatted, duplicate, or incomplete data within a dataset. When combining multiple data sources, there are many opportunities for data to be duplicated or mislabeled. If data is incorrect, outcomes and algorithms are unreliable, even though they may look correct.
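As a rough illustration of what fixing and removing records can look like in practice, the sketch below trims stray whitespace, drops records that have no usable key, and keeps only the first record for each key. The function name `clean_records` and the use of a lowercased email address as the matching key are assumptions for this example; real datasets usually need rules tailored to their own fields.

```python
def clean_records(records, key_field="email"):
    """Return a cleaned copy of records (hypothetical helper).

    - strips leading/trailing whitespace from string values
    - drops records whose key field is missing or blank
    - keeps only the first record seen for each normalized key
    """
    cleaned = []
    seen = set()
    for rec in records:
        norm = {k: v.strip() if isinstance(v, str) else v for k, v in rec.items()}
        key = str(norm.get(key_field) or "").lower()
        if not key:
            continue  # incomplete record: nothing to match on
        if key in seen:
            continue  # duplicate of a record already kept
        seen.add(key)
        norm[key_field] = key
        cleaned.append(norm)
    return cleaned


raw = [
    {"name": " Ann ", "email": "Ann@Example.com"},
    {"name": "Ann", "email": "ann@example.com "},
    {"name": "Bob", "email": ""},
]
print(clean_records(raw))
```

Exact matching on a single field is the simplest possible rule. It misses near-duplicates such as misspelled names, which is where fuzzy matching and learned approaches come in.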

There is no single way to prescribe the exact steps in the data cleaning process because they will vary from dataset to dataset. But it is crucial for businesses to establish a template for data cleaning so that data management becomes a reliable, repeatable process. Having clean data will ultimately increase overall productivity and allow for the highest quality information to inform executive decision-making. Benefits include:

- Removal of errors when multiple sources of data are at play

- Fewer errors leading to happier clients and less frustrated employees

- Ability to map the different functions of what data is intended to do

- Monitoring errors and better reporting to see where errors are coming from, making it easier to fix incorrect or corrupt data for future applications

- More efficient business practices and quicker decision-making

Use Machine Learning to Clean and Manage Your Data

DataGroomr leverages machine learning to eliminate many of the time-consuming processes associated with rule-based deduplication tools. With DataGroomr’s algorithms, there are no rules to set up, nothing to download, and the system learns to catch new duplicates based on your decision to label records as duplicates (or not). This is called active learning, which means that the system will continue to modify the weights assigned to each field based on user interaction and consequently improve duplicate detection, becoming more efficient and accurate as it is used.
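To give a feel for how user feedback can adjust field weights, here is a toy sketch in Python. It is not DataGroomr’s algorithm, which is not described in this article. The field list, the `threshold` and `rate` values, and the update rule are all assumptions chosen for illustration. Each field gets a similarity score between 0 and 1, the overall score is a weighted average, and when the user’s label disagrees with the prediction, weight shifts toward the fields that supported the user’s decision.

```python
from difflib import SequenceMatcher

FIELDS = ("name", "email", "company")  # hypothetical field list


def field_similarities(a, b):
    """Similarity in [0, 1] for each field of two records."""
    return {
        f: SequenceMatcher(
            None, str(a.get(f, "")).lower(), str(b.get(f, "")).lower()
        ).ratio()
        for f in FIELDS
    }


def score(weights, sims):
    """Weighted average of the per-field similarities."""
    return sum(weights[f] * sims[f] for f in FIELDS) / sum(weights.values())


def update_weights(weights, sims, is_duplicate, threshold=0.8, rate=0.5):
    """Adjust weights only when the prediction disagrees with the label."""
    predicted = score(weights, sims) >= threshold
    if predicted == is_duplicate:
        return dict(weights)
    direction = 1 if is_duplicate else -1
    # Missed duplicate: favor fields that matched (similarity above 0.5).
    # False alarm: favor fields that differed. Weights stay positive.
    return {
        f: max(0.05, weights[f] + direction * rate * (sims[f] - 0.5))
        for f in FIELDS
    }


weights = {f: 1.0 for f in FIELDS}
sims = {"name": 0.4, "email": 1.0, "company": 0.6}  # illustrative values
new_weights = update_weights(weights, sims, is_duplicate=True)
print(score(weights, sims), score(new_weights, sims))
```

In this toy example, labeling the pair as a duplicate raises the weight on the email field, which matched exactly, so similar pairs score higher the next time.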

Get started with a free 14-day trial of DataGroomr.